Microphones in upcoming Macs, iPhones, iPads, and Apple Watches could differentiate between natural and artificial sounds per a new Apple patent filing (number 20200090644) for “systems and methods for classifying sounds.” The goal: to improve the performance of Siri, the company’s “virtual digital assistant.”

In the patent filing, Apple notes that consumer electronic devices such as laptops, desktop computers, tablet computers, smartphones, and smart speakers are often equipped with virtual assistant programs such as Siri that are activated when they detect a trigger sound (such as “Hey, Siri”). In a home environment and other environments, some sounds originate naturally, like a person speaking or a door slamming, while other sounds originate from an artificial source like the speakers of a television or radio (also referred to as playback sounds).

Apple says it’s important for the virtual assistant program to be able to discriminate between natural sounds and artificial sounds. For example, if Siri is to alert emergency services when it detects a person’s calls for help, it needs to know whether the detected speech comes from a real human present in the room or from a movie being watched there; otherwise it risks false positives. Apple wants to address this need for Siri to classify sounds as natural or artificial, along with other related and unrelated problems in the art.

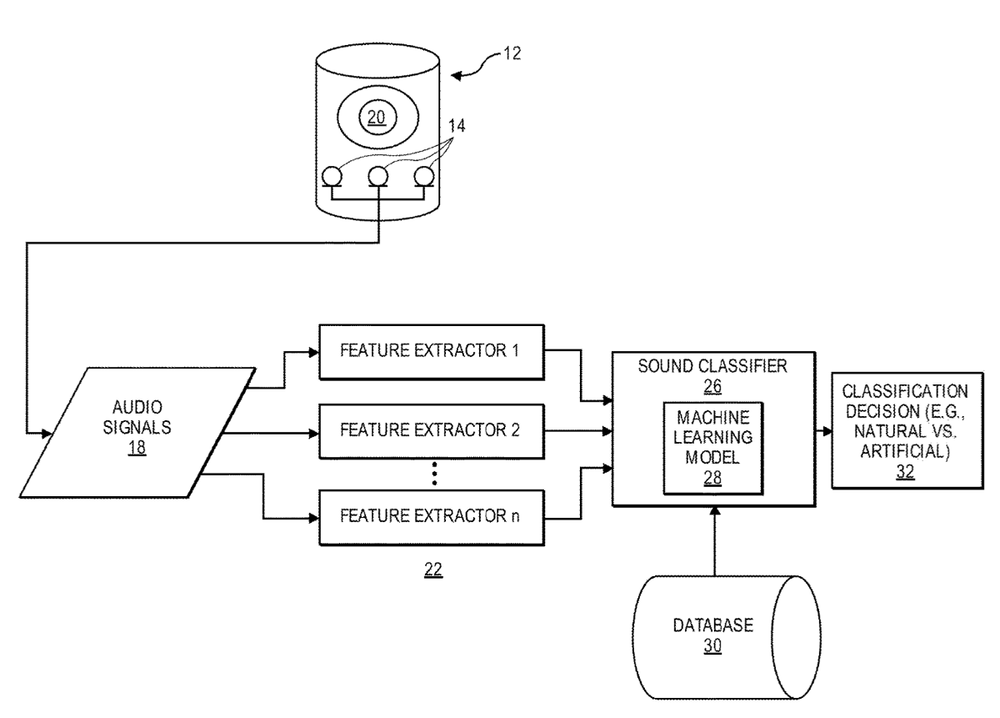

Here’s the summary of the patent filing: “An electronic device has one or more microphones that pick up a sound. At least one feature extractor processes the audio signals from the microphones, that contain the picked up sound, to determine several features for the sound.

“The electronic device also includes a classifier that has a machine learning model which is configured to determine a sound classification, such as artificial versus natural for the sound, based upon at least one of the determined features. Other aspects are also described and claimed.”
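
To make the summary a bit more concrete, here is a minimal Python sketch of the two stages it names: a feature extractor followed by a classifier. Everything specific in it is an assumption made for illustration, not something taken from the filing: the two features (RMS energy and zero-crossing rate), the nearest-centroid model standing in for the "machine learning model," the names `extract_features` and `NearestCentroidClassifier`, and the toy training vectors, whose labels are arbitrary and say nothing about how real natural or playback sounds behave.

```python
import math
from typing import Dict, List, Sequence, Tuple


def extract_features(samples: Sequence[float]) -> Tuple[float, float]:
    """Return (rms_energy, zero_crossing_rate) for one frame of audio samples.

    These two features are illustrative assumptions; the filing's summary
    only says that "several features" are determined.
    """
    if len(samples) < 2:
        raise ValueError("need at least two samples")
    rms = math.sqrt(sum(s * s for s in samples) / len(samples))
    # Count adjacent sample pairs whose signs differ.
    crossings = sum(
        1 for a, b in zip(samples, samples[1:]) if (a < 0) != (b < 0)
    )
    zcr = crossings / (len(samples) - 1)
    return rms, zcr


class NearestCentroidClassifier:
    """Hypothetical stand-in for the classifier's machine learning model."""

    def __init__(self) -> None:
        self.centroids: Dict[str, List[float]] = {}

    def fit(
        self, features: Sequence[Sequence[float]], labels: Sequence[str]
    ) -> "NearestCentroidClassifier":
        sums: Dict[str, List[float]] = {}
        counts: Dict[str, int] = {}
        for vec, label in zip(features, labels):
            acc = sums.setdefault(label, [0.0] * len(vec))
            for i, value in enumerate(vec):
                acc[i] += value
            counts[label] = counts.get(label, 0) + 1
        # Each class is summarized by the mean of its training vectors.
        self.centroids = {
            label: [v / counts[label] for v in acc]
            for label, acc in sums.items()
        }
        return self

    def predict(self, vec: Sequence[float]) -> str:
        # Pick the class whose centroid is closest in Euclidean distance.
        return min(
            self.centroids,
            key=lambda label: math.dist(vec, self.centroids[label]),
        )


# Made-up feature vectors with arbitrary labels, for demonstration only.
training_features = [(0.1, 0.1), (0.2, 0.1), (0.8, 0.5), (0.9, 0.6)]
training_labels = ["natural", "natural", "artificial", "artificial"]
model = NearestCentroidClassifier().fit(training_features, training_labels)

frame = [0.1, 0.2, -0.1, 0.15, 0.1, -0.2, 0.1, 0.05]
print(model.predict(extract_features(frame)))
```

A shipping system would presumably use richer features, audio from more than one microphone, and a model trained on real recordings; the sketch only shows how the feature extractor's output feeds the classification step.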