Apple has filed for a patent (number 20210141525) for “disambiguation of multitouch gesture recognition for 3D interaction.”

It involves multitouch devices such as the iPhone and iPad that allow users to interact with displayed information using gestures that are typically made by touching a touch-sensitive surface with one or more fingers or other contact objects such as a stylus. Per the patent filing, the number of contact points and the motion of the contact point(s) are detected by the multitouch device and interpreted as a gesture, in response to which the device can perform various actions.

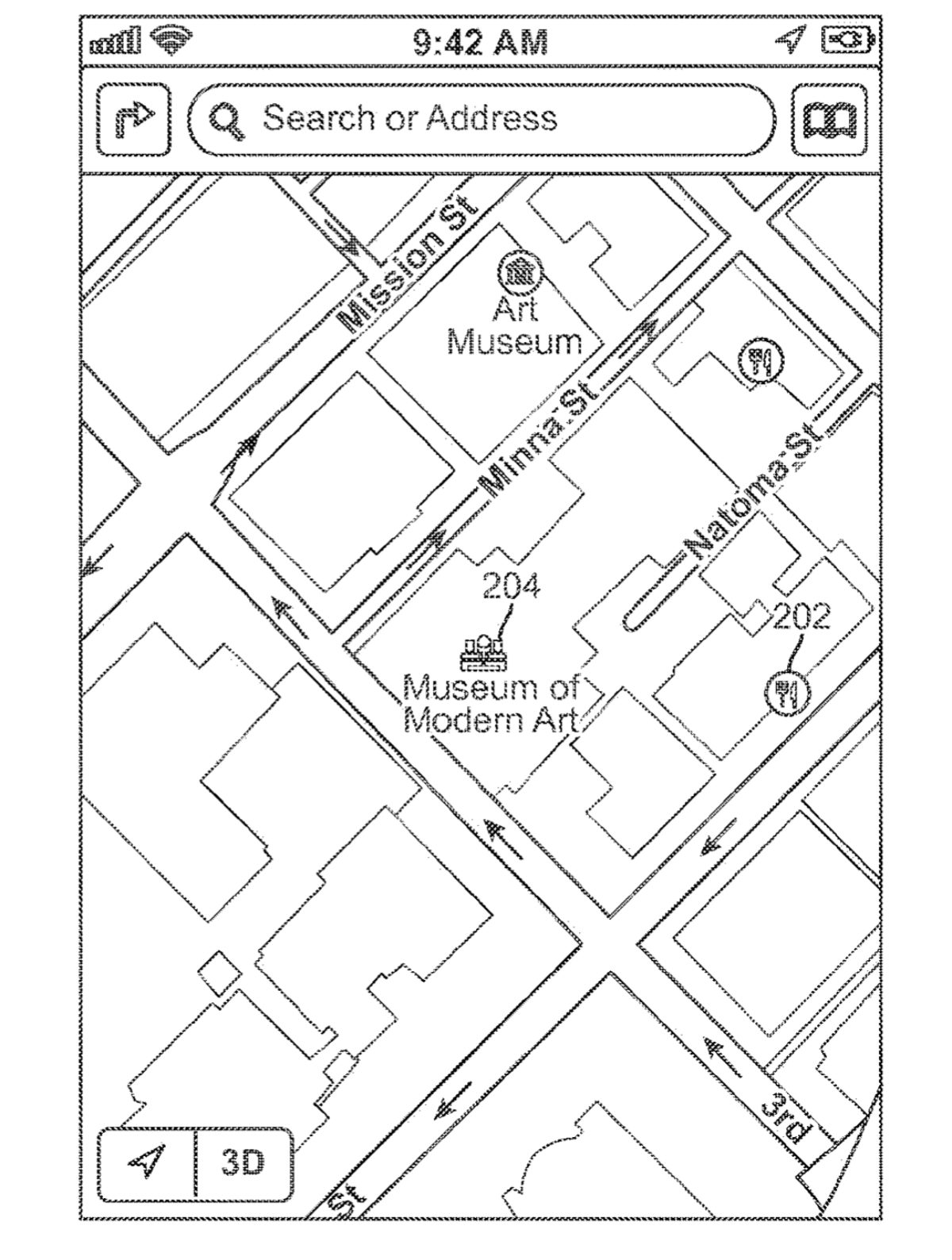

For example, when interacting with a displayed image depicting a 3D region (such as a map), you may want to pan the image to see a different portion of the region, zoom in or out to see greater detail or a larger portion of the region, and/or rotate or tilt the image to view the region from different angles.

You may want to make a single adjustment, or to adjust several viewing parameters at once, such as zooming while panning or rotating. Per Apple’s patent filing, an iPhone or iPad could include a sensor to detect your gestures and use software-enabled “interpretation logic” to translate a detected gesture into one or more commands to modify a displayed image.

Here’s the summary of the patent filing: “A multitouch device can interpret and disambiguate different gestures related to manipulating a displayed image of a 3D object, scene, or region. Examples of manipulations include pan, zoom, rotation, and tilt. The device can define a number of manipulation modes, including one or more single-control modes such as a pan mode, a zoom mode, a rotate mode, and/or a tilt mode. The manipulation modes can also include one or more multi-control modes, such as a pan/zoom/rotate mode that allows multiple parameters to be modified simultaneously.”
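To make the idea of single-control and multi-control modes more concrete, here is a minimal Python sketch of how a two-finger gesture might be sorted into one of them. It is an illustration only, not Apple's method: the function names (`gesture_deltas`, `choose_mode`), the threshold values, and the rule of "one parameter changed means single-control, more than one means multi-control" are all assumptions, and tilt is left out for brevity.

```python
import math

def gesture_deltas(start, end):
    """Compare two contact points at the start and end of a gesture.

    start and end are each a pair of (x, y) tuples, one per finger.
    Returns (pan_vector, zoom_ratio, rotation_in_radians).
    """
    (ax0, ay0), (bx0, by0) = start
    (ax1, ay1), (bx1, by1) = end

    # Pan: how far the midpoint between the two fingers moved.
    pan = ((ax1 + bx1) / 2 - (ax0 + bx0) / 2,
           (ay1 + by1) / 2 - (ay0 + by0) / 2)

    # Zoom: finger separation after the gesture relative to before.
    d0 = math.hypot(bx0 - ax0, by0 - ay0)
    d1 = math.hypot(bx1 - ax1, by1 - ay1)
    zoom = d1 / d0 if d0 else 1.0

    # Rotation: change in the angle of the line joining the fingers,
    # wrapped into the range [-pi, pi].
    a0 = math.atan2(by0 - ay0, bx0 - ax0)
    a1 = math.atan2(by1 - ay1, bx1 - ax1)
    rot = math.atan2(math.sin(a1 - a0), math.cos(a1 - a0))

    return pan, zoom, rot

def choose_mode(pan, zoom, rot, pan_px=10.0, zoom_frac=0.1, rot_rad=0.15):
    """Pick a manipulation mode. Thresholds are hypothetical values."""
    active = []
    if math.hypot(*pan) > pan_px:
        active.append("pan")
    if abs(zoom - 1.0) > zoom_frac:
        active.append("zoom")
    if abs(rot) > rot_rad:
        active.append("rotate")

    if not active:
        return "none"
    if len(active) == 1:
        return active[0]          # single-control mode
    return "pan/zoom/rotate"      # multi-control mode

# Two fingers sliding right together: a pure pan.
start = ((0, 0), (100, 0))
end = ((50, 0), (150, 0))
print(choose_mode(*gesture_deltas(start, end)))
```

A real recognizer would work on a stream of touch events rather than a single start/end pair, and would likely lock into a mode early in the gesture; the sketch only shows the classification step.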
